What We Do

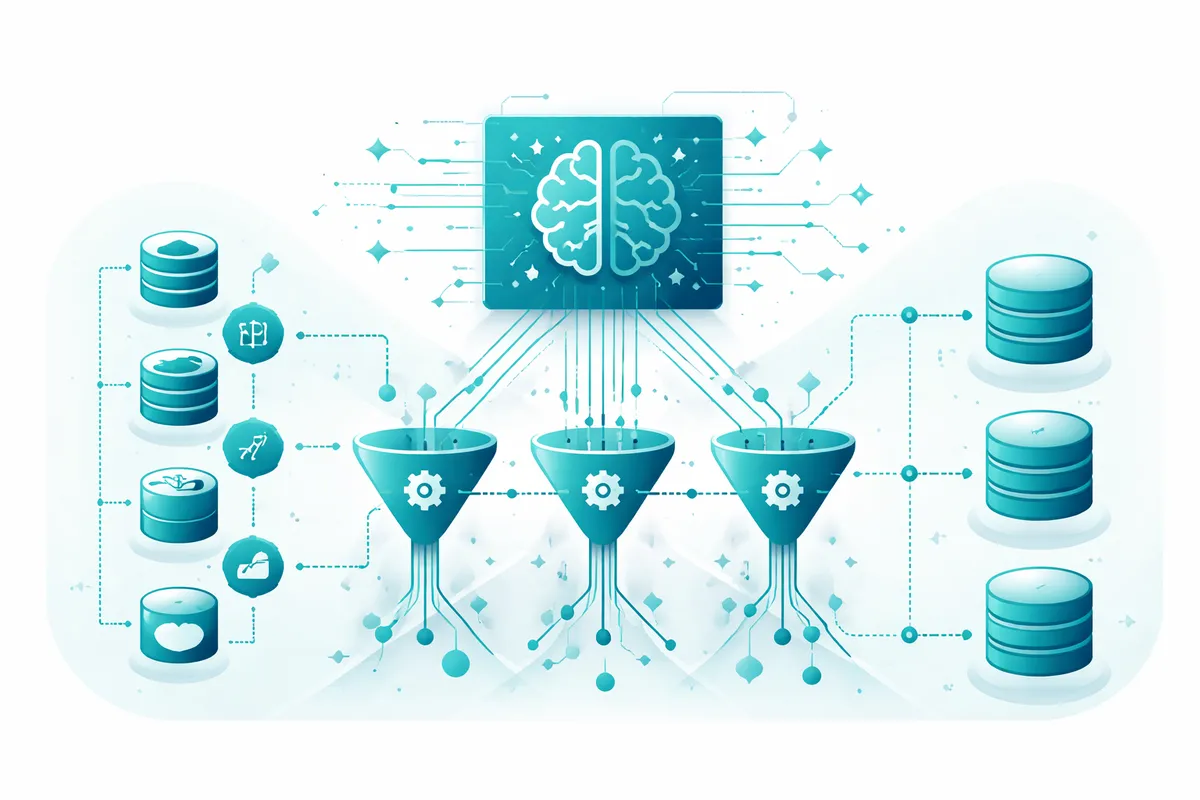

Even the most sophisticated AI model produces unreliable outputs when the data feeding it is inconsistent, delayed, or poorly structured. Data pipelines are the infrastructure that determines whether your AI investments work or fail. We build automated pipelines that extract data from every source your business uses, apply transformation and cleaning logic that enforces quality standards, and load the results into your AI systems, analytics platforms, or data warehouses on the schedule and at the latency your use case requires.

Real-time for operational AI. Batch for analytical AI. The right architecture for what you are actually building.

How We Work

We start with a source audit that catalogs every system generating data relevant to your AI or analytics use cases, along with its data format, update frequency, and access method. That audit produces a pipeline architecture document that maps every data flow from source to destination. The build begins with the extraction layer, which connects to databases, APIs, file systems, and streaming sources. Transformation logic comes next: field mapping, type normalization, deduplication, enrichment, and quality validation rules.
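To make the transformation step concrete, here is a minimal Python sketch covering field mapping, type normalization, quality validation, and deduplication. The field names (`cust_id`, `amt`, `ts`), the validation rule, and the deduplication key are all hypothetical choices made for illustration; a real pipeline would take them from the source audit.

```python
from datetime import datetime

# Hypothetical mapping from source field names to target field names.
FIELD_MAP = {"cust_id": "customer_id", "amt": "amount", "ts": "created_at"}


def normalize(raw):
    """Rename known fields and coerce them to target types.

    Raises ValueError or TypeError when a value cannot be coerced.
    """
    # Field mapping: keep only known source fields, under their target names.
    row = {target: raw.get(source) for source, target in FIELD_MAP.items()}
    if row["customer_id"] is None:
        raise ValueError("missing customer_id")
    # Type normalization.
    row["customer_id"] = str(row["customer_id"]).strip()
    row["amount"] = float(row["amount"])
    row["created_at"] = datetime.fromisoformat(row["created_at"])
    return row


def is_valid(row):
    """Assumed quality rule: non-empty customer id, non-negative amount."""
    return bool(row["customer_id"]) and row["amount"] >= 0


def transform(records):
    """Return (clean, rejected); exact duplicates are dropped silently."""
    seen = set()
    clean, rejected = [], []
    for raw in records:
        try:
            row = normalize(raw)
        except (TypeError, ValueError):
            rejected.append(raw)
            continue
        if not is_valid(row):
            rejected.append(raw)
            continue
        # Deduplication on an assumed natural key.
        key = (row["customer_id"], row["created_at"])
        if key in seen:
            continue
        seen.add(key)
        clean.append(row)
    return clean, rejected
```

Returning rejected records alongside clean ones, rather than discarding them, keeps bad input available for inspection and reprocessing.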

Load targets are configured with appropriate schemas. Monitoring is built in from day one: failed job alerts, data quality anomaly detection, schema change detection, and throughput dashboards. When something breaks, the right person knows immediately and has enough context to resolve it quickly.
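As one illustration of the monitoring described above, schema change detection can be sketched as a comparison between an expected schema and the schema observed in an incoming batch. This is a simplified Python sketch, not a production monitor: the function names `infer_schema` and `diff_schema` are hypothetical, and types are inferred from the first non-null value of each field.

```python
def infer_schema(rows):
    """Infer {field: type name} from the first non-null value per field."""
    schema = {}
    for row in rows:
        for field, value in row.items():
            if field not in schema and value is not None:
                schema[field] = type(value).__name__
    return schema


def diff_schema(expected, observed):
    """Return human-readable descriptions of differences between schemas."""
    changes = []
    for field in sorted(expected.keys() - observed.keys()):
        changes.append(f"removed: {field}")
    for field in sorted(observed.keys() - expected.keys()):
        changes.append(f"added: {field} ({observed[field]})")
    for field in sorted(expected.keys() & observed.keys()):
        if expected[field] != observed[field]:
            changes.append(
                f"type changed: {field} {expected[field]} -> {observed[field]}"
            )
    return changes


# Example: the source dropped created_at, added region,
# and started sending amount as a string.
expected = {"customer_id": "str", "amount": "float", "created_at": "str"}
batch = [{"customer_id": "42", "amount": "19.90", "region": "EU"}]
changes = diff_schema(expected, infer_schema(batch))
```

A non-empty `changes` list is what an alert would carry, so the recipient sees which fields changed and how, not just that a job failed.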