How We Build NLP Solutions for Rogers Park

NLP solution design begins with a clear problem definition. The most common failure mode in NLP projects is building a system optimized for a problem slightly different from the one the organization actually has. We invest time upfront to understand exactly what text data exists, what questions the organization needs to answer with that data, and what actions the analysis should enable. That groundwork keeps a technically sophisticated solution from missing the organization's real problem.

Data assessment evaluates the text data the NLP system will process. For Rogers Park organizations with multilingual document collections, this assessment includes language identification across the corpus, a review of text quality (scanned documents with OCR errors, abbreviated case note conventions, informal language in community surveys), and an evaluation of whether the intended NLP task is feasible with the data available. Some NLP applications require large volumes of labeled training data; others work well with pre-trained models that need little or no additional training. The data assessment determines which approach fits.
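As a rough illustration of the text-quality part of this assessment, the sketch below flags documents that look like noisy OCR output. It assumes a simple, hypothetical heuristic: a token counts as "noisy" if it mixes letters and digits (as in `c1ient`) or is a run of stray symbols (as in `|~`). The function names and the 0.3 threshold are illustrative assumptions, not a fixed part of our process; the heuristic also produces false positives on legitimate tokens such as `COVID-19` or `3pm`, so in practice any threshold would be tuned against a hand-reviewed sample.

```python
import string
from dataclasses import dataclass


@dataclass
class QualityReport:
    doc_id: str
    token_count: int
    noisy_ratio: float
    flagged: bool


def is_noisy_token(token: str) -> bool:
    """Hypothetical OCR-noise heuristic for a single whitespace-separated token."""
    core = token.strip(string.punctuation)
    if not core:
        # Pure symbol runs such as "|~" are common OCR debris;
        # a single punctuation mark on its own is treated as normal.
        return len(token) > 1
    # str.isalpha/isdigit are Unicode-aware, so non-Latin scripts are handled too.
    has_alpha = any(ch.isalpha() for ch in core)
    has_digit = any(ch.isdigit() for ch in core)
    # Letters mixed with digits ("w1th", "ca5e") often indicate misrecognized characters.
    return has_alpha and has_digit


def assess_quality(doc_id: str, text: str, threshold: float = 0.3) -> QualityReport:
    """Flag a document for manual review if its share of noisy tokens exceeds threshold."""
    tokens = text.split()
    if not tokens:
        # Empty documents (e.g. failed scans) are flagged rather than silently skipped.
        return QualityReport(doc_id, 0, 0.0, True)
    noisy = sum(is_noisy_token(t) for t in tokens)
    ratio = noisy / len(tokens)
    return QualityReport(doc_id, len(tokens), round(ratio, 3), ratio > threshold)


if __name__ == "__main__":
    print(assess_quality("note-1", "Client met with case manager on Tuesday."))
    print(assess_quality("scan-7", "C1ient rnet w1th |~ ca5e rn4nager"))
```

Note that a misrecognition like `rnet` for `met` passes this check, which is why a heuristic like this only narrows down which documents a person should look at.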

Multilingual NLP design requires explicit choices about which languages to support and at what capability level. Current large language models offer strong multilingual capability for many languages, including Spanish, French, German, Arabic, and Chinese. For less widely supported languages, including some of the African and Southeast Asian languages spoken in Rogers Park, capability varies by task and model. We state capability levels for specific languages candidly and design systems that degrade gracefully for lower-resource languages, flagging uncertain output instead of silently producing poor-quality results.
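One way to make "degrade gracefully" concrete is an explicit capability table that decides, per language, whether automated output is used directly, used only with human review, or not produced at all. The sketch below assumes language codes have already been detected upstream and that the model is any text-to-text function. The tier names, the default table, and the functions `route_by_language` and `process` are hypothetical; in a real project each table entry would be set from task-specific testing rather than assumed.

```python
from enum import Enum
from typing import Callable, Dict, Optional


class Tier(Enum):
    AUTOMATED = "automated"        # tested and reliable: output used directly
    HUMAN_REVIEW = "human_review"  # usable but uneven: output returned with a review flag
    UNSUPPORTED = "unsupported"    # untested or too weak: no automated output


# Hypothetical default table (ISO 639-1 codes). Entries should come from
# evaluating the chosen model on the actual task, language by language.
CAPABILITY: Dict[str, Tier] = {
    "en": Tier.AUTOMATED,
    "es": Tier.AUTOMATED,
    "fr": Tier.AUTOMATED,
    "de": Tier.AUTOMATED,
    "ar": Tier.AUTOMATED,
    "zh": Tier.AUTOMATED,
}


def route_by_language(lang_code: str, capability: Dict[str, Tier] = CAPABILITY) -> Tier:
    # Unknown languages default to UNSUPPORTED, never AUTOMATED, so the system
    # does not silently process a language nobody has tested.
    return capability.get(lang_code, Tier.UNSUPPORTED)


def process(
    text: str,
    lang_code: str,
    model_fn: Callable[[str], str],
    capability: Dict[str, Tier] = CAPABILITY,
) -> Dict[str, object]:
    tier = route_by_language(lang_code, capability)
    output: Optional[str] = None
    if tier is not Tier.UNSUPPORTED:
        output = model_fn(text)
    return {
        "tier": tier.value,
        "output": output,
        # Anything not fully automated needs a person to look at it.
        "needs_review": tier is not Tier.AUTOMATED,
    }


if __name__ == "__main__":
    # str.upper stands in for a real model call in this example.
    print(process("hola", "es", str.upper))
    print(process("some text", "zz", str.upper))
```

The key design choice is the default: a language missing from the table is routed to people, so adding support for a new language is a deliberate, tested step rather than an accident.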

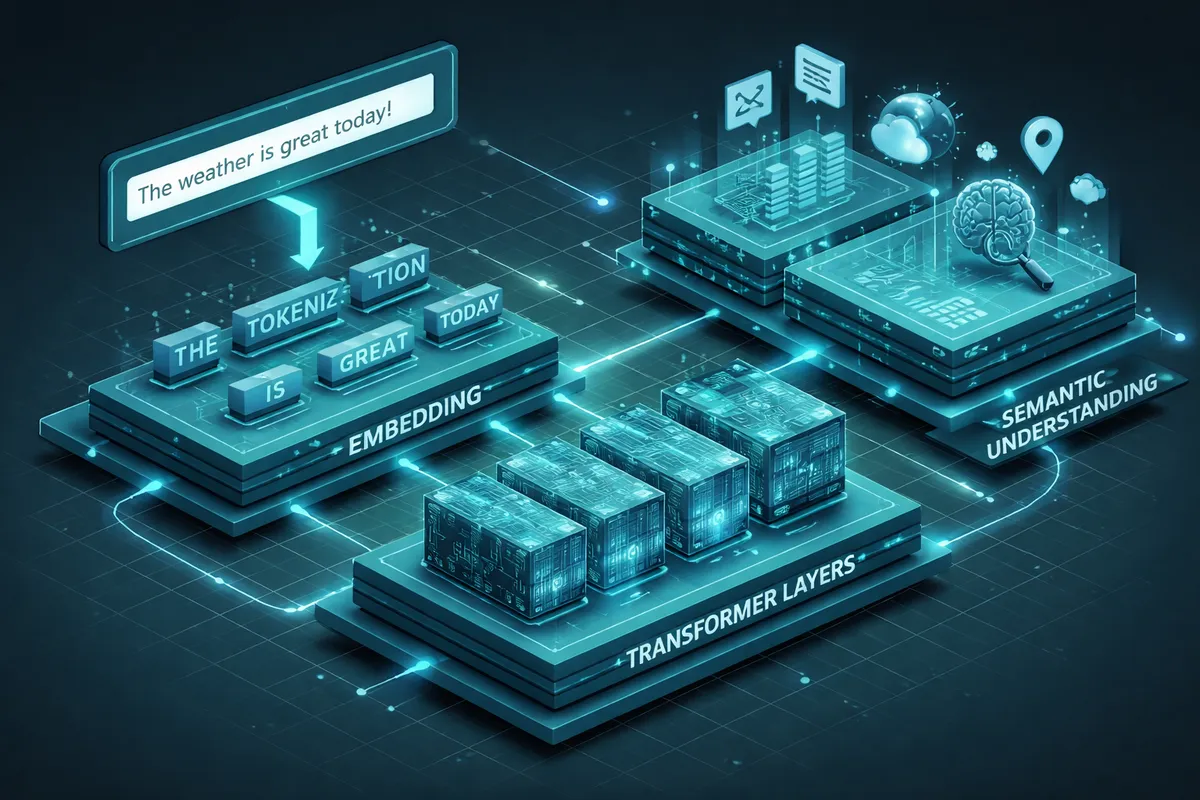

Model selection and architecture choices follow from the specific task. Text classification, named entity recognition, information extraction, semantic search, and document summarization each have different model architectures and fine-tuning requirements. For Rogers Park organizations without in-house AI specialists, we explain these choices and their tradeoffs in plain language rather than with jargon-heavy technical justifications.

Industries We Serve in Rogers Park

Nonprofits and social service organizations use NLP for case note analysis, community survey synthesis, grant and funder research, program outcome reporting from unstructured text data, and the document classification tasks that currently require manual sorting and routing.

Healthcare and health services organizations, including Howard Brown Health, use NLP for clinical documentation analysis, patient feedback synthesis, population health text analytics, and extraction of structured information from unstructured health records to support clinical quality improvement.

Educational and research organizations, including academic departments at Loyola University Chicago, use NLP for literature review automation, research document analysis, student feedback synthesis, and other text-intensive research support tasks that NLP tools handle at scale.

Community organizing and advocacy organizations use NLP for policy document analysis, media monitoring, community survey synthesis, and the systematic text analysis that supports evidence-based advocacy strategies.

Independent businesses with sufficient text data use NLP for customer review analysis, competitive intelligence from web content, sales conversation analysis, and the customer feedback synthesis that improves product and service decisions.

What to Expect Working With Us

1. Problem definition and data assessment. We work with your team to define the specific NLP problem, assess the available text data, evaluate feasibility for the required languages and text types, and agree on the evaluation criteria that define success.

2. Solution design and model selection. We design the NLP pipeline, select appropriate pre-trained models or determine where custom fine-tuning is required, and document the system architecture before development begins.

3. Development, testing, and calibration. We build the NLP system and test it with representative samples from your actual data, including the edge cases that reveal where the system performs less reliably. We calibrate the system's confidence thresholds and routing logic based on test performance.

4. Deployment and evaluation framework. We deploy the system with monitoring infrastructure and establish an ongoing evaluation framework that tracks performance over time, because NLP system performance can drift as the language and document types it processes evolve.
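Calibration and monitoring (steps 3 and 4) can be illustrated with a small sketch: choose the lowest confidence threshold at which automated decisions on a labeled test sample still reach a target precision, route everything below it to human review, and later check whether the share of items routed to review has moved away from the rate seen at calibration. The 0.9 precision target, the 0.10 tolerance, and all function names are hypothetical. Review-rate monitoring is only one simple drift signal, used here as a stand-in for whatever metrics a given project would actually track.

```python
import math
from typing import Sequence


def calibrate_threshold(
    confidences: Sequence[float],
    correct: Sequence[bool],
    target_precision: float = 0.9,
) -> float:
    """Lowest threshold whose accepted predictions (confidence >= threshold)
    reach target_precision on the labeled test sample. Returns math.inf if
    none qualifies, meaning everything should go to human review."""
    pairs = sorted(zip(confidences, correct), key=lambda p: p[0], reverse=True)
    best = math.inf
    hits = 0
    for i, (conf, ok) in enumerate(pairs):
        hits += ok
        # Only evaluate at the end of a run of tied confidences, because a
        # threshold equal to `conf` accepts every item with that confidence.
        last_of_tie = i + 1 == len(pairs) or pairs[i + 1][0] < conf
        if last_of_tie and hits / (i + 1) >= target_precision:
            best = conf  # keeps moving lower while precision still qualifies
    return best


def route(confidence: float, threshold: float) -> str:
    return "automated" if confidence >= threshold else "human_review"


def review_rate(confidences: Sequence[float], threshold: float) -> float:
    """Share of items that would be routed to human review."""
    if not confidences:
        return 0.0
    return sum(c < threshold for c in confidences) / len(confidences)


def drift_alert(
    baseline_rate: float,
    recent_confidences: Sequence[float],
    threshold: float,
    tolerance: float = 0.10,
) -> bool:
    """Flag when the recent review rate differs from the calibration-time
    rate by more than `tolerance` (absolute difference)."""
    return abs(review_rate(recent_confidences, threshold) - baseline_rate) > tolerance


if __name__ == "__main__":
    test_conf = [0.95, 0.90, 0.85, 0.80, 0.60, 0.50]
    test_ok = [True, True, True, False, True, False]
    t = calibrate_threshold(test_conf, test_ok)
    baseline = review_rate(test_conf, t)
    print(t, baseline, route(0.7, t))
    print(drift_alert(baseline, [0.9, 0.7, 0.6, 0.5], t))
```

With this sample data, the threshold comes out at 0.85, half the test items fall below it, and a later batch where three of four items fall below it raises an alert. A rising review rate does not say what changed, only that the incoming text no longer looks like the text the system was calibrated on, which is the cue to re-evaluate.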