How We Build AI Data Pipelines for Chinatown

Data pipeline development for Chinatown businesses begins with a systems inventory: documenting every system the business uses that generates or stores data, assessing data quality in each system, and identifying integration points that would produce the most valuable business intelligence. Most Chinatown family businesses have more data in their existing systems than they are currently using; the pipeline work is often about making existing data accessible rather than generating new data.

We then design the integration architecture that connects those systems in the ways that serve the business's most important decision-making needs. For a Wentworth Avenue restaurant, the highest-value integration might be connecting reservation data to email marketing so the business can target communication by visit frequency and occasion type. For an import business on Archer Avenue, the highest-value integration might be connecting purchase order data to sales data to create a demand forecasting model that prevents both stockouts and overstock on the specialty products that drive the business's margins.
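As a minimal sketch of the restaurant example, the snippet below groups reservation records by guest and labels each guest by visit frequency and occasion type, which is the shape of data an email marketing tool would need for targeting. The field names (`email`, `occasion`), the sample records, and the three-visit threshold are illustrative assumptions, not a description of any particular reservation system.

```python
from collections import Counter, defaultdict

# Hypothetical reservation export; real field names will differ by system.
reservations = [
    {"email": "mei@example.com", "occasion": "birthday"},
    {"email": "mei@example.com", "occasion": "family dinner"},
    {"email": "mei@example.com", "occasion": "lunar new year"},
    {"email": "sam@example.com", "occasion": "business lunch"},
]

def build_segments(reservations, regular_threshold=3):
    """Label each guest by visit frequency and collect their occasion types."""
    visits = Counter(r["email"] for r in reservations)
    occasions = defaultdict(set)
    for r in reservations:
        occasions[r["email"]].add(r["occasion"])
    return {
        email: {
            # The threshold is an assumed business rule, not a standard.
            "frequency": "regular" if count >= regular_threshold else "occasional",
            "occasions": sorted(occasions[email]),
        }
        for email, count in visits.items()
    }

segments = build_segments(reservations)
```

Each entry in `segments` could then be synced to a mailing list tag, so a Lunar New Year campaign reaches the guests who have booked for that occasion before.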

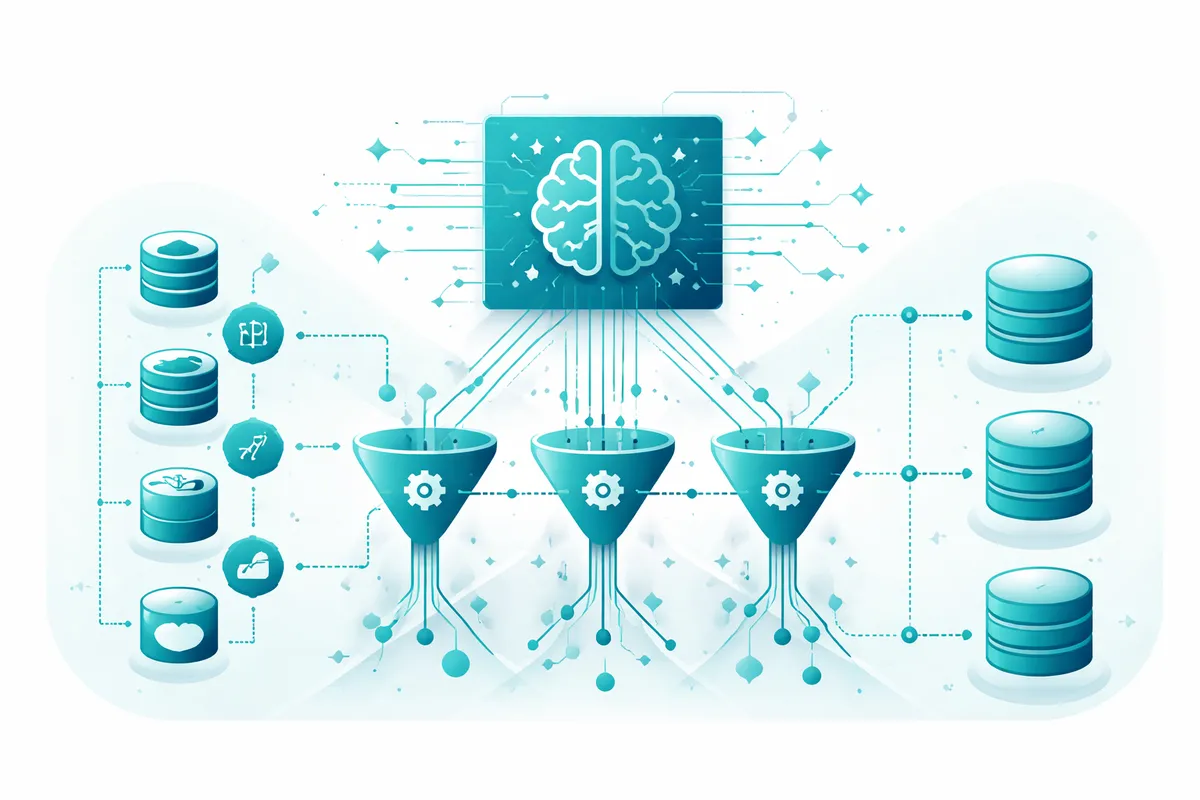

AI-enhanced data pipelines go beyond simple data transfer by applying machine learning to the data that flows through them. An AI pipeline processing customer data does not just move records from one system to another; it identifies patterns in customer behavior, flags anomalies suggesting data quality issues, and generates predictions and recommendations that make business intelligence actionable rather than merely visible.
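To make the anomaly-flagging idea concrete, here is a small sketch that marks values far from the rest of a series. It uses a modified z-score based on the median, a simple statistical stand-in for the machine learning a production pipeline might apply; the function name, the sample sales figures, and the 3.5 threshold are assumptions chosen for illustration.

```python
from statistics import median

def flag_anomalies(values, threshold=3.5):
    """Return one boolean per value: True where the value looks anomalous.

    Uses the modified z-score (median and median absolute deviation),
    which is less distorted by the outliers it is trying to find than
    a mean-based score would be.
    """
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        # All values are (nearly) identical, so nothing stands out.
        return [False] * len(values)
    return [abs(0.6745 * (v - med) / mad) > threshold for v in values]

# Hypothetical daily sales totals; 1250 is likely a keying error for 125.
daily_sales = [120, 130, 125, 128, 1250, 122]
flags = flag_anomalies(daily_sales)
```

A flagged value is a prompt for review rather than proof of an error: a genuine festival-day spike would be flagged in the same way as a mistyped total.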

Bilingual data handling is a specific requirement for Chinatown businesses whose records include data entered in both English and Chinese. Systems that are not configured for Unicode can corrupt records containing Chinese characters, whether Traditional or Simplified, turning names and product descriptions into unreadable text. We configure pipeline infrastructure that handles multilingual data correctly, preserving the integrity of records regardless of the language in which they were entered.
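The sketch below illustrates the kind of check involved: it writes a record containing Chinese text to CSV bytes and reads it back, confirming that UTF-8 preserves the record while a single-byte encoding cannot store it at all. The record contents and helper names are hypothetical; only Python's standard `csv` and `io` modules are used.

```python
import csv
import io

# Hypothetical customer record mixing Chinese and English fields.
record = {"name": "陳大文", "dish": "叉燒包", "note": "no peanuts"}

def to_csv_bytes(record, encoding="utf-8"):
    """Serialize one record to CSV and encode it as bytes."""
    out = io.StringIO(newline="")
    writer = csv.DictWriter(out, fieldnames=list(record))
    writer.writeheader()
    writer.writerow(record)
    return out.getvalue().encode(encoding)

def from_csv_bytes(raw, encoding="utf-8"):
    """Decode CSV bytes and return the first data row as a dict."""
    return next(csv.DictReader(io.StringIO(raw.decode(encoding), newline="")))

def can_store(record, encoding):
    """Report whether the encoding can represent every character in the record."""
    try:
        to_csv_bytes(record, encoding)
        return True
    except UnicodeEncodeError:
        return False

restored = from_csv_bytes(to_csv_bytes(record))
utf8_ok = can_store(record, "utf-8")
latin1_ok = can_store(record, "latin-1")
```

Here the failure is loud because Python raises an error. In practice the more damaging case is a system that silently substitutes question marks or garbled characters, which is why round-trip checks on real records are worth running.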

Industries We Serve in Chinatown

Restaurants and food businesses on Wentworth Avenue and Cermak Road manage reservations, POS sales data, supplier invoices, and marketing platform data in separate systems. We build data pipelines connecting these systems to give restaurant operators a complete view of customer behavior, sales performance by dish and daypart, and marketing effectiveness, which informs how much to invest in promotions around the Chinese cultural calendar.

Import retailers and specialty food businesses at Chinatown Square and along Archer Avenue manage inventory, supplier relationships, purchase orders, and sales data across multiple systems. We build data pipelines connecting inventory to sales to supplier lead times, creating the operational intelligence that prevents stockouts during peak demand periods around Lunar New Year and Mid-Autumn Festival and the over-investment in slow-moving inventory that consumes working capital.
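One simple way to connect sales, inventory, and supplier lead times is a reorder point: the stock level at which a new purchase order should be placed. The sketch below uses the common textbook formula (average daily sales multiplied by lead time, plus safety stock); the function names and sample figures are assumptions for illustration, and a real forecast would also account for seasonal peaks such as Lunar New Year.

```python
def reorder_point(daily_sales, lead_time_days, safety_stock=0):
    """Stock level at which to reorder: expected demand during the
    supplier lead time, plus a safety buffer."""
    average_daily = sum(daily_sales) / len(daily_sales)
    return average_daily * lead_time_days + safety_stock

def needs_reorder(on_hand, daily_sales, lead_time_days, safety_stock=0):
    """True when current stock has fallen to or below the reorder point."""
    return on_hand <= reorder_point(daily_sales, lead_time_days, safety_stock)

# Hypothetical figures for one specialty product.
recent_daily_sales = [10, 12, 8, 10]   # units sold per day
point = reorder_point(recent_daily_sales, lead_time_days=14, safety_stock=20)
should_order = needs_reorder(150, recent_daily_sales, lead_time_days=14, safety_stock=20)
```

Raising `safety_stock` ahead of a festival period is the simplest lever for avoiding stockouts, at the cost of tying up more working capital.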

Herbal medicine and traditional health practices on Princeton Avenue manage patient records, appointment histories, and treatment protocols in systems that do not automatically connect to communication platforms or outcome tracking tools. We build data pipelines giving practitioners a complete view of patient engagement, the communication patterns supporting treatment adherence, and the operational metrics informing practice capacity planning.

Bakeries and specialty food producers in Chinatown Square and along 22nd Place manage production scheduling, ingredient inventory, custom order management, and retail sales in systems reflecting the different operational phases of their businesses. We build data pipelines connecting production to inventory to sales, reducing the ingredient waste that occurs when production is not calibrated to actual demand.

Cultural institutions and community organizations at the Pui Tak Center and the Chinese American Museum of Chicago manage donor records, program participants, volunteers, and grant reporting in separate systems. We build data pipelines connecting these records to give institution staff a complete view of community engagement, donor relationship health, and the program performance data supporting grant applications and board reporting.

Service businesses and professional practices serving Chinatown's community manage client records, appointment histories, billing data, and communication logs in systems that do not automatically share information. We build data pipelines giving service business operators a complete view of client relationships, service utilization patterns informing capacity planning, and the billing and revenue data supporting business management decisions.

What to Expect Working With Us

1. Systems inventory and data assessment. We document every data-generating system the business uses, assess the quality and completeness of data in each system, and identify the integration opportunities that would produce the most valuable business intelligence. For Chinatown businesses, this assessment also evaluates how existing systems handle multilingual data and whether records containing Chinese characters are stored correctly.

2. Integration architecture design. We design the pipeline architecture connecting the business's systems in the ways that serve its most important decisions, specifying the data flows, transformation rules that standardize data across systems, and AI enhancements adding predictive capability to the pipeline. The architecture is designed around the business's actual decision-making needs rather than technical elegance.

3. Pipeline development and integration. We build the pipeline infrastructure, connect it to the business's existing systems through APIs or data exports, and validate the data flows before the pipeline goes live with production data. Validation for Chinatown businesses includes testing with the business's own data, including records in multiple languages, to confirm the pipeline handles that data accurately.

4. Dashboard development and team training. We build the reporting interfaces making the pipeline's output accessible to the business's operators, train the team on how to use those interfaces, and provide documentation supporting ongoing use without requiring continuous technical support. Data pipelines that produce reports nobody knows how to interpret do not improve business decision-making; we prioritize usability alongside technical reliability.
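The validation described in step 3 can be sketched as a comparison between records in the source system and records that arrived at the destination. The example below reports records that went missing, records whose contents changed, and records containing the Unicode replacement character (U+FFFD), a common sign that Chinese text was decoded incorrectly. The function name, the `id` key, and the sample rows are hypothetical.

```python
def validate_transfer(source, destination, key="id"):
    """Compare source and destination rows and report problems by record key."""
    dest_by_key = {row[key]: row for row in destination}
    missing = [row[key] for row in source if row[key] not in dest_by_key]
    changed = [
        row[key]
        for row in source
        if row[key] in dest_by_key and dest_by_key[row[key]] != row
    ]
    # U+FFFD appears when bytes could not be decoded as valid text.
    corrupted = [
        row[key]
        for row in destination
        if any("\ufffd" in str(value) for value in row.values())
    ]
    return {"missing": missing, "changed": changed, "corrupted": corrupted}

# Hypothetical sample: record 2 was dropped and record 3 was garbled in transit.
source_rows = [
    {"id": 1, "name": "李小龍"},
    {"id": 2, "name": "Amy Wong"},
    {"id": 3, "name": "黃飛鴻"},
]
destination_rows = [
    {"id": 1, "name": "李小龍"},
    {"id": 3, "name": "\ufffd\ufffd\ufffd"},
]
report = validate_transfer(source_rows, destination_rows)
```

An empty report on a representative sample of real records, including those entered in Chinese, is a reasonable condition to meet before the pipeline goes live.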